Summary

AI customer service initiatives usually fail not because the model is weak, but because teams deploy chatbots without tying them to real issue resolution, agent trust, and measurable outcomes. The article argues that companies should focus on resolution metrics over deflection, treat AI as a full service stack rather than a single bot, and strengthen their knowledge base, workflow selection, and agent experience. It also stresses the need for governance, guardrails, and meaningful metrics like first contact resolution, reopen rate, CSAT, and cost per resolution instead of vanity KPIs. The recommended path is to start small with one high-volume workflow, run a controlled pilot, and improve based on weekly reviews of failures and gaps.

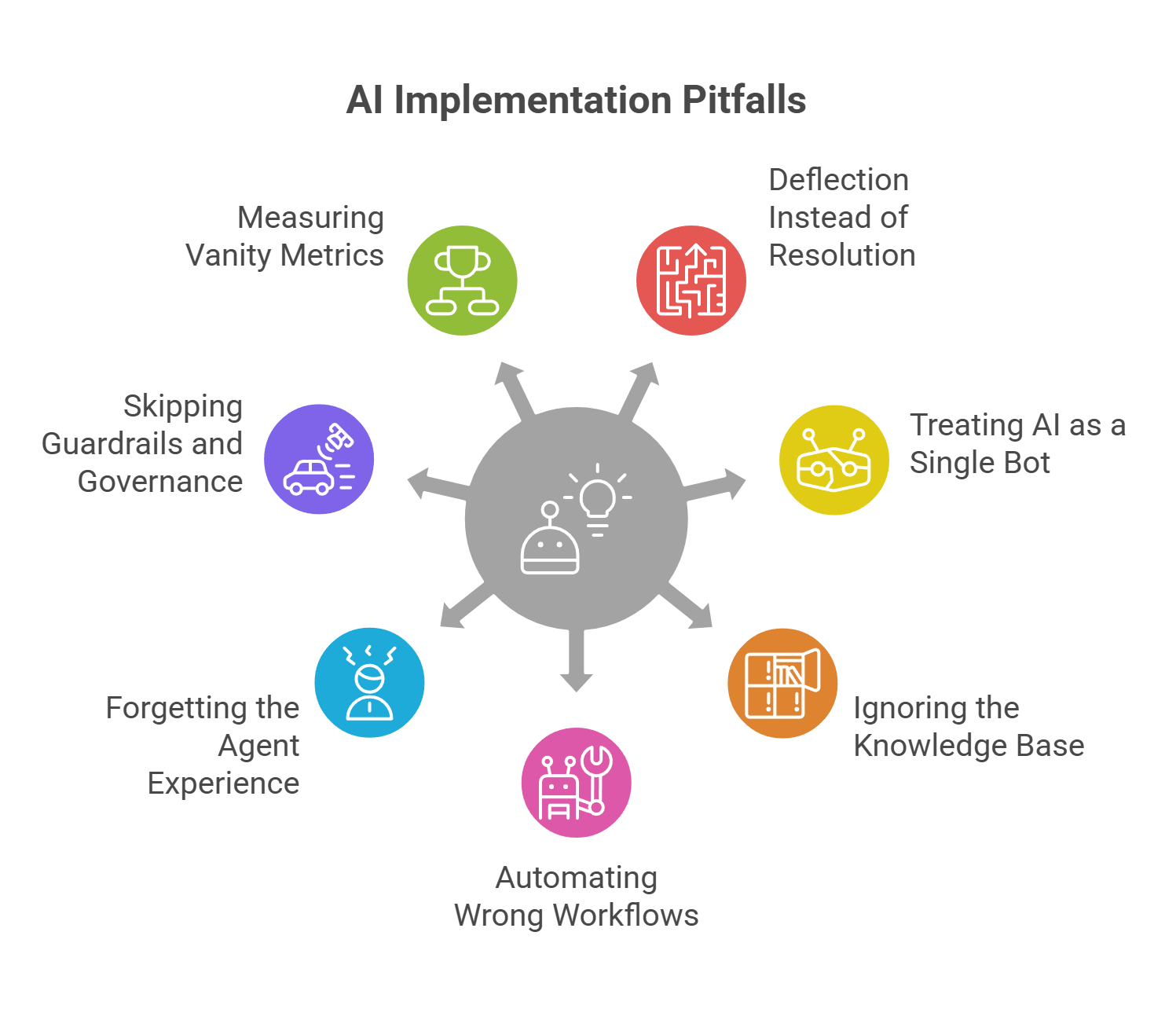

Most teams don’t fail with AI for customer service because the model is "bad." They fail because they launch a chatbot, call it a transformation, and never connect it to real resolution. The result? Customers get stuck in loops. Agents lose trust. Leaders walk away believing AI in customer support is overhyped.

The fix is simple (not easy): stop optimizing for novelty and start optimizing for outcomes. Below are seven AI customer service mistakes and the practical moves big SaaS/PaaS teams use to turn AI into measurable service performance.

Mistake #1: Chasing deflection instead of resolution

Deflection looks good in dashboards, but it often hurts the customer experience. In AI-powered customer service, the real north star is resolution: Was the issue solved correctly and quickly?

What to do instead:

- Track resolution rate, time-to-resolution, and reopen rate (not just bot containment).

- Design a frictionless escape hatch: one tap to agent, with context carried over.
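To make the first bullet concrete, here is a minimal sketch of how the three resolution metrics could be computed from exported ticket data. The `Ticket` fields and the sample values are assumptions for illustration, not the schema of any particular helpdesk tool.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical fields; map them to whatever your helpdesk exports.
    opened_hour: float               # hours since an arbitrary reference point
    resolved_hour: Optional[float]   # None if the ticket was never resolved
    reopened: bool                   # True if the customer came back on the same issue


def resolution_metrics(tickets: list[Ticket]) -> dict[str, float]:
    """Return resolution rate, median time-to-resolution, and reopen rate."""
    resolved = [t for t in tickets if t.resolved_hour is not None]
    if not resolved:
        # Nothing resolved (or no tickets at all): report zeros instead of dividing by zero.
        return {"resolution_rate": 0.0, "median_hours_to_resolution": 0.0, "reopen_rate": 0.0}
    durations = [t.resolved_hour - t.opened_hour for t in resolved]
    return {
        "resolution_rate": len(resolved) / len(tickets),
        "median_hours_to_resolution": median(durations),
        "reopen_rate": sum(t.reopened for t in resolved) / len(resolved),
    }


# Made-up sample data, purely illustrative.
sample = [
    Ticket(0.0, 2.0, False),
    Ticket(1.0, 5.0, True),
    Ticket(2.0, None, False),
    Ticket(3.0, 4.0, False),
]
metrics = resolution_metrics(sample)
print(metrics)
```

The median is used for time-to-resolution here because a few very long cases can distort an average; that is a design choice, not a requirement.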

Mistake #2: Treating AI like a single bot

Effective AI customer support is not a bot; it’s a system. Customers need answers, agents need help, and operations need control. A standalone chat widget can’t deliver all three.

Do instead: Build an AI service stack

- Assist: summaries, suggested replies, next-best actions for agents.

- Self-serve: guided troubleshooting and knowledge Q&A.

- Automate: safe actions across systems (with approvals where required).

- Analyze: trend detection, knowledge gaps, and coaching signals.

Mistake #3: Ignoring the knowledge base

If your knowledge is outdated, AI will answer confidently and incorrectly. The fastest way to improve AI accuracy in customer service is often not a new model—it’s better knowledge hygiene.

Do instead: Create a minimum viable knowledge foundation

- Assign owners and freshness SLAs for high-traffic articles.

- Standardize issue taxonomy (categories, intents, outcomes).

- Add a feedback loop: every escalation tags missing or incorrect content.
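A freshness SLA is easy to check automatically. The sketch below flags high-traffic articles whose last review is older than the SLA, so their owners can be notified. The `Article` fields, the 90-day SLA, and the 500-view traffic cutoff are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Article:
    # Hypothetical fields; adapt to your knowledge base export.
    title: str
    owner: str
    last_reviewed: date
    monthly_views: int


def stale_articles(articles: list[Article], today: date,
                   sla_days: int = 90, min_views: int = 500) -> list[Article]:
    """Return high-traffic articles not reviewed within the SLA, busiest first."""
    cutoff = today - timedelta(days=sla_days)
    overdue = [
        a for a in articles
        if a.monthly_views >= min_views and a.last_reviewed < cutoff
    ]
    return sorted(overdue, key=lambda a: a.monthly_views, reverse=True)


# Made-up sample data, purely illustrative.
articles = [
    Article("Reset password", "dana", date(2023, 11, 1), 4000),
    Article("Update billing", "lee", date(2024, 5, 10), 2500),
    Article("Legacy API", "sam", date(2022, 1, 1), 40),      # stale but low traffic
    Article("Export data", "dana", date(2024, 1, 15), 900),
]
overdue = stale_articles(articles, today=date(2024, 6, 1))
for a in overdue:
    print(f"{a.title} (owner: {a.owner}) is past its freshness SLA")
```

Sorting by traffic puts the articles most likely to feed wrong answers to customers at the top of the review queue.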

Mistake #4: Automating the wrong workflows

Teams often start with the messiest cases—complaints, billing disputes, policy exceptions—because they feel high impact. That’s exactly where mistakes are most expensive.

Do instead: Start with boring wins

- Intelligent triage and routing (intent, urgency, language, sentiment signals).

- Auto-summaries and timelines for long-running cases.

- Status checks, appointment changes, password resets, order updates.

Mistake #5: Forgetting the agent experience

If agents don’t trust the AI, it won’t be used, and AI adoption will stall. This is a product experience problem, not a training issue.

Do instead: Make AI assistance feel effortless

- One-click insertion for suggested replies and summaries.

- Visible sources and citations for fast verification.

- Add thumbs-up/down + reason codes to continuously improve quality.

Mistake #6: Skipping guardrails and governance

In customer service, a single wrong answer can become a screenshot. AI for customer service needs boundaries as much as intelligence.

Do instead: Ship with guardrails from day one

- Confidence thresholds: low confidence triggers clarification or escalation.

- Approved actions only: the AI can suggest broadly, execute selectively.

- Privacy controls: PII redaction, role-based access, audit logs.
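The three guardrails above can be sketched as a small decision gate. Everything named here is an assumption for illustration: the 0.75 threshold, the action names, the allowlist, and the email-only redaction (real PII redaction covers far more than email addresses).

```python
import re
from dataclasses import dataclass

# Hypothetical values; tune the threshold and allowlist per workflow.
CONFIDENCE_THRESHOLD = 0.75
APPROVED_ACTIONS = {"check_order_status", "reset_password"}

# Deliberately narrow: matches email addresses only.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


@dataclass
class Proposal:
    action: str        # what the AI wants to do
    confidence: float  # score in [0, 1]; assumed to be reasonably calibrated


def decide(proposal: Proposal) -> str:
    """Apply the confidence gate first, then the action allowlist."""
    if proposal.confidence < CONFIDENCE_THRESHOLD:
        return "clarify_or_escalate"
    if proposal.action not in APPROVED_ACTIONS:
        return "suggest_only"  # the agent sees the suggestion; nothing runs
    return "execute"


def redact(text: str) -> str:
    """Mask email addresses before the text is stored."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


audit_log: list[dict] = []


def handle(proposal: Proposal, customer_message: str) -> str:
    """Decide, then record the decision with a redacted copy of the message."""
    decision = decide(proposal)
    audit_log.append({
        "action": proposal.action,
        "decision": decision,
        "message": redact(customer_message),
    })
    return decision


print(handle(Proposal("reset_password", 0.92), "I'm jane.doe@example.com, locked out"))
print(handle(Proposal("issue_refund", 0.95), "Please refund my last order"))
print(handle(Proposal("reset_password", 0.40), "something about my login maybe"))
```

Checking confidence before the allowlist means a low-confidence proposal never executes, even for an approved action.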

Mistake #7: Measuring vanity metrics

Metrics like “bot conversations” or “deflection rate” don’t prove success. You need metrics tied to customer outcomes and operational health.

Do instead: Measure impact where it matters

- Experience: CSAT/CES by cohort, sentiment recovery after resolution.

- Operations: first contact resolution, reopen rate, transfers, backlog.

- Economics: cost per resolution, time to productivity, human-free resolution rate (HFR).
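Definitions for these metrics vary between teams, so it helps to write them down as code. The sketch below uses one plausible set of definitions (an assumption, not a standard): first contact resolution is the share of resolved issues that took exactly one contact, HFR is the share resolved with no human involved, and cost per resolution is total support cost divided by resolved issues.

```python
from dataclasses import dataclass


@dataclass
class Resolution:
    # Hypothetical fields describing one resolved issue.
    contacts_needed: int   # how many customer contacts it took
    human_involved: bool   # True if an agent touched the case


def outcome_metrics(resolutions: list[Resolution], total_cost: float) -> dict[str, float]:
    """Compute FCR, HFR, and cost per resolution under the definitions above."""
    n = len(resolutions)
    if n == 0:
        return {"fcr": 0.0, "hfr": 0.0, "cost_per_resolution": 0.0}
    return {
        "fcr": sum(r.contacts_needed == 1 for r in resolutions) / n,
        "hfr": sum(not r.human_involved for r in resolutions) / n,
        "cost_per_resolution": total_cost / n,
    }


# Made-up sample data, purely illustrative.
resolved = [
    Resolution(1, False),
    Resolution(1, True),
    Resolution(2, True),
    Resolution(1, False),
    Resolution(3, True),
]
outcomes = outcome_metrics(resolved, total_cost=250.0)
print(outcomes)
```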

A lightweight ROI framework

To justify AI in customer support, keep the math simple and conservative. Focus on three levers:

- Handle time reduction: agent assist cuts research and wrap-up.

- Fewer repeats: better triage and knowledge reduce reopens and transfers.

- More self-serve completions: customers resolve simple issues without waiting.

Validate with a pilot: pick 1–2 high-volume workflows, run for 2–4 weeks, and compare the metrics against a control group.
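Here is one way the three levers could be turned into a pilot-versus-control estimate. It is a sketch under stated assumptions: both cohorts see the same incoming volume, each reopen costs one extra handle, and a 50% haircut is applied to keep the estimate conservative. All numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    # Hypothetical per-cohort measurements from the pilot period.
    incoming_issues: int
    self_serve_rate: float      # share of issues resolved without an agent
    reopen_rate: float          # share of agent-handled issues that reopen
    avg_handle_minutes: float   # agent minutes per handle


def agent_minutes(c: Cohort) -> float:
    """Agent minutes consumed, assuming each reopen costs one extra handle."""
    handled = c.incoming_issues * (1 - c.self_serve_rate)
    return handled * (1 + c.reopen_rate) * c.avg_handle_minutes


def estimated_savings(control: Cohort, pilot: Cohort,
                      cost_per_agent_minute: float, haircut: float = 0.5) -> float:
    """Savings over the pilot period, scaled down by a conservative haircut."""
    minutes_saved = agent_minutes(control) - agent_minutes(pilot)
    return minutes_saved * cost_per_agent_minute * haircut


# Made-up numbers: the pilot improves all three levers modestly.
control = Cohort(incoming_issues=1000, self_serve_rate=0.20,
                 reopen_rate=0.10, avg_handle_minutes=12.0)
pilot = Cohort(incoming_issues=1000, self_serve_rate=0.25,
               reopen_rate=0.08, avg_handle_minutes=10.0)

savings = estimated_savings(control, pilot, cost_per_agent_minute=1.0)
print(f"Estimated savings for the period: {savings:.2f}")
```

With these inputs the control cohort uses about 10,560 agent minutes and the pilot about 8,100, so the haircut estimate is roughly 1,230 cost units for the period. If the estimate only looks good without the haircut, treat the business case as unproven.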

Your next 30 days: one workflow, fully instrumented

If you want AI for customer service that works in production, start narrow: choose one repetitive workflow, define success metrics, and launch with guardrails. Review failures weekly: missing knowledge, wrong routing, unclear policies, or unsafe automation.

AI won’t replace your support org. But with the right stack, governance, and measurement, it will make your team faster, more consistent, and easier to scale—without sacrificing trust.